-

Little Dry Creek Trail

Two Saturdays ago I decided I wanted to take a long walk. It was only about 5 °C, but sunny, so I figured it would be fine. I bundled up in my coat and thermal jeans. I wasn’t 100% certain where I would go. I am trying to get to know my…

-

Power walking

No, it is not what you are picturing. I did not walk extra briskly wearing a jogging outfit today. Rather, I walked 1.2 miles to the supermarket and back. The way back is mostly uphill, and I had my backpack filled with 10 cans of chickpeas (because they were on sale at 10 for $10) and a…

-

Walking

I just moved (back) to Colorado this week and I have been exploring my new neighborhood by walking. One of the nice things about Colorado is that the sun shines a lot, even in the winter, so taking a walk in the afternoon is a great way to get a bit of exercise, since my…

-

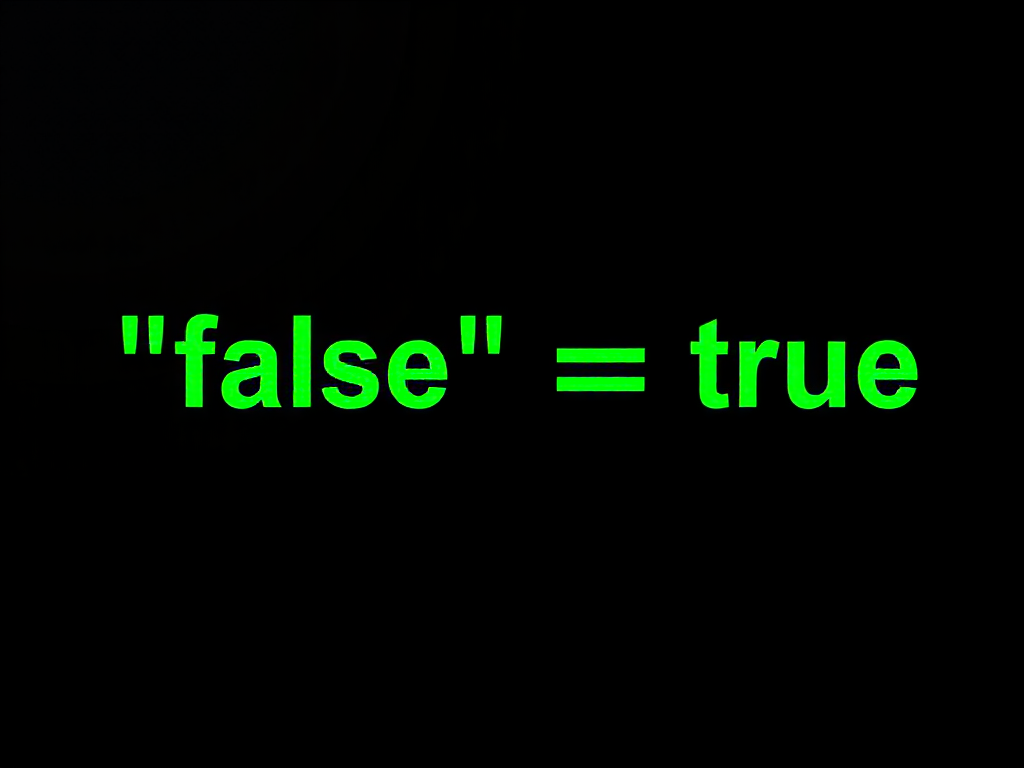

Elasticsearch type gotcha

I was recently working on updating some Elasticsearch tests we have for WordPress.com. We have so-called isolated tests, which use a real MySQL database and a real Elasticsearch test cluster. We create sample blogs, posts, and so forth, store them in the database, which triggers our hooks for indexing into Elasticsearch, and then we make…

-

Sunset walk

I am very fortunate to be able to work from home with a very flexible schedule. One day last week I noticed that it was probably going to be a beautiful sunset, so I took a break and walked to the fields where there is a great view of the sunset. I took several pictures…